Alert fatigue is one of the most persistent and costly problems in security operations – and it’s getting worse. According to Cybersecurity Insiders, 76% of SOC teams cite alert fatigue as a top operational challenge, and 73% report analyst burnout as a direct consequence. Yet despite growing budgets and headcount, the noise is only getting louder.

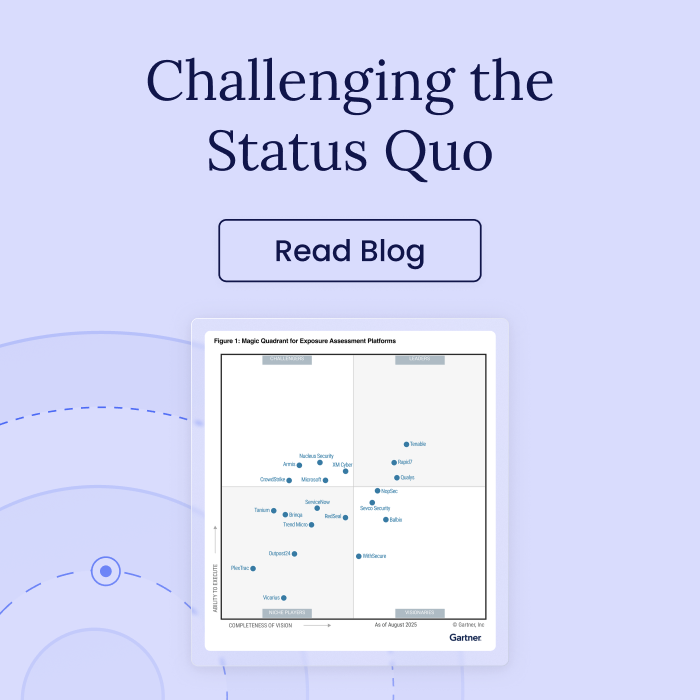

To get to the root of why alert fatigue persists even as security teams keep investing more, I am grateful for the opportunity to sit down for a webinar with Jonathan Nunez, Senior Director Analyst at Gartner. The webinar focuses on the structural problems driving SOC analyst burnout, why generic approaches make them worse, and what it actually takes to fix them.

Why Generic Approaches Keep Failing

Security teams today have no shortage of tools or data – SIEMs, EDRs, vulnerability scanners, exposure assessment platforms, you name it. The problem is that the strategies built around those tools were designed for one-size-fits-all coverage. Every detection rule, every playbook, every validation test is designed to work across any environment, for any organization. That generic approach creates three predictable problems:

- Generic threat detection. Broad detection rules promise wide coverage – and deliver high alert volumes with low fidelity. Every false positive costs time, money, and analyst attention. This creates a tension between breadth and efficiency: broad coverage overwhelms analysts with noise, but narrowing coverage creates blind spots.

- Generic incident response. Standardized playbooks assume everyone follows the same steps. In practice, they rarely do. Without context, cross-team coordination breaks down, communication lags, and teams end up focused on containment rather than root cause. The same incidents recur because the underlying exposures never get addressed.

- Generic security control validation. Template-based testing checks boxes. It doesn’t account for the specific architecture, configurations, and business risks that make each environment unique. The result is a “test completed” report that gives stakeholders confidence the environment is secure – even when exploitable attack paths remain open.

What’s more, these three challenges amplify each other. Weak detection generates more incidents. Generic response leaves root causes intact. Validation that doesn’t reflect reality means teams never know where the real gaps are.

More Data Does Not Close the Gap

Most organizations already have all the security data they need – SIEMs, EDRs, vulnerability scanners, exposure assessment platforms and more generate signals from across the entire environment. The challenge isn’t collecting more; it’s making this data more accessible and more actionable.

That accessibility gap is costing defenders time they don’t have. Attackers are exploiting vulnerabilities in days – sometimes minutes – while defenders take months to detect and remediate. According to Mandiant, the mean time to exploit vulnerabilities has turned negative – exploitation now begins, on average, seven days before a patch is even available. On the defender side, mean time to remediate known exploited vulnerabilities still hovers around a jaw-dropping six months.

What separates an alert worth acting on from one that belongs in the triage queue? Context. And most SOC tools today just don’t provide it.

The Fix: Exposure Intelligence

The fix is in how teams leverage the data they already have – enriching alerts to produce what I call “exposure intelligence.” This changes both what analysts can see and – crucially – how fast they can act.

What does this look like? A generic alert says “PowerShell ran.” Exposure intelligence says “PowerShell ran on a machine with a validated direct path to a crown jewel asset using a known TTP.” The second version is immediately actionable. The first gets added to the triage queue.
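To make the contrast concrete, here is a minimal sketch of that enrichment step in Python. Everything in it is an assumption for illustration: the host names, the `EXPOSURE_CONTEXT` lookup, the `KNOWN_TTPS` mapping and the `enrich` function are hypothetical, and in a real deployment the context would come from your exposure assessment platform rather than a hard-coded dictionary.

```python
from dataclasses import dataclass

# Hypothetical exposure data: host -> validated attack-path context.
# A real deployment would pull this from an exposure assessment platform.
EXPOSURE_CONTEXT = {
    "ws-042": {"reaches_crown_jewel": True, "target": "payroll-db", "hops": 1},
    "ws-107": {"reaches_crown_jewel": False, "target": None, "hops": None},
}

# Illustrative mapping from process name to a MITRE ATT&CK technique ID
# (T1059.001 is Command and Scripting Interpreter: PowerShell).
KNOWN_TTPS = {"powershell.exe": "T1059.001"}


@dataclass
class Alert:
    host: str
    process: str


def enrich(alert: Alert) -> dict:
    """Attach exposure context to a raw alert and derive a priority."""
    ctx = EXPOSURE_CONTEXT.get(alert.host, {})
    ttp = KNOWN_TTPS.get(alert.process.lower())
    # Actionable only when the host sits on a validated path to a
    # crown jewel asset AND the activity maps to a known technique.
    actionable = bool(ctx.get("reaches_crown_jewel")) and ttp is not None
    return {
        "host": alert.host,
        "process": alert.process,
        "ttp": ttp,
        "target": ctx.get("target"),
        "hops": ctx.get("hops"),
        "priority": "act-now" if actionable else "triage-queue",
    }


print(enrich(Alert("ws-042", "powershell.exe")))
print(enrich(Alert("ws-107", "powershell.exe")))
```

The same "PowerShell ran" event lands in two different places depending on where it ran, which is the whole point: the alert itself did not change, only the context around it.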

Three dimensions make exposure intelligence work:

- Deep Attack Surface and Business Context: Understand every asset and identity (human or non-human), along with its role and criticality within the organization and its current security posture. It’s helpful to see this in a topographical view that clearly outlines how assets and exposures interconnect to form attack paths.

- Reachability, Attack Complexity and Exploit Conditions: Understand whether an attacker can reach a compromised asset and how difficult that path would be to take, and continuously validate whether a wide range of exploit conditions are met. If something is risky but not reachable, or a compensating control is in place, it’s not a priority.

- Contextualized and Operational: Data and findings are only helpful if they’re set in context and made actionable for the teams consuming them. Moving from exposure data to exposure intelligence that’s meaningful for the SOC tends to require mapping findings to MITRE ATT&CK TTPs, surfacing business context and potential impact as a prioritization vector, and pushing insights into the SOC’s existing tools and workflows.

Those three dimensions also reveal choke points – areas where multiple attack paths intersect. Fixing a single exposure on a choke point can block multiple attack paths that compromise dozens of critical assets.
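The choke point idea can be sketched in a few lines of Python. This is a simplified illustration, not how any particular platform computes it: the `ATTACK_PATHS` data and the `choke_points` function are hypothetical, each path is assumed to be an ordered list of nodes ending at a critical asset, and a node counts as a choke point once at least `min_paths` paths run through it.

```python
from collections import defaultdict

# Hypothetical attack paths: each list runs from an entry point to a
# critical asset (the last element).
ATTACK_PATHS = [
    ["phished-laptop", "svc-account", "jump-host", "payroll-db"],
    ["exposed-vpn", "jump-host", "customer-db"],
    ["dev-workstation", "svc-account", "jump-host", "source-repo"],
    ["exposed-vpn", "file-share"],
]


def choke_points(paths, min_paths=2):
    """Rank nodes by how many attack paths pass through them."""
    paths_through = defaultdict(int)
    assets_behind = defaultdict(set)
    for path in paths:
        target = path[-1]
        # Count every node on the path except the target asset itself.
        for node in set(path[:-1]):
            paths_through[node] += 1
            assets_behind[node].add(target)
    ranked = [
        (node, count, sorted(assets_behind[node]))
        for node, count in paths_through.items()
        if count >= min_paths
    ]
    # Most-shared nodes first; ties broken alphabetically for stable output.
    return sorted(ranked, key=lambda r: (-r[1], r[0]))


for node, count, assets in choke_points(ATTACK_PATHS):
    print(f"{node}: {count} paths, protects {assets}")
```

In this toy data set, `jump-host` sits on three of the four paths, so fixing the exposure there cuts off routes to three critical assets at once.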

The Solution: Extend Your Existing Toolset

Your teams can realize value from exposure intelligence by simply feeding it into the systems analysts use every day. There’s no need to add another layer to the stack. The goal is to give analysts “context and prioritization, to optimize the way they work and enable them to be more proactive.”

What does this look like, in practice?

- SIEM: Exposure assessment platforms send context, not just logs – a login on a choke point gets prioritized immediately rather than buried in the queue

- EDR: Endpoint activity gets contextualized, flagging whether a suspicious process sits on a path to a crown jewel asset

- SOAR: Playbooks get tailored to the specific environment and its controls rather than running off generic templates

- IT Ops tools: Remediation teams receive exact guidance on which choke points to close, reducing the back-and-forth between security and IT
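As a rough illustration of the SIEM case, the sketch below re-ranks an alert queue so that anything on a choke point moves to the front. The `CHOKE_POINTS` set, the alert fields and the `rerank` function are all hypothetical; a real integration would use whatever enrichment fields and APIs your SIEM actually exposes.

```python
# Hypothetical set of choke-point hosts supplied by an exposure
# assessment platform.
CHOKE_POINTS = {"jump-host", "svc-account"}

# Hypothetical alert queue in arrival order.
QUEUE = [
    {"id": 1, "host": "ws-107", "event": "login"},
    {"id": 2, "host": "jump-host", "event": "login"},
    {"id": 3, "host": "ws-200", "event": "login"},
    {"id": 4, "host": "svc-account", "event": "login"},
]


def rerank(queue, choke_points):
    """Move alerts on choke points to the front of the queue.

    sorted() is stable, so alerts keep their arrival order within
    each group (choke point vs. everything else).
    """
    return sorted(queue, key=lambda alert: alert["host"] not in choke_points)


for alert in rerank(QUEUE, CHOKE_POINTS):
    print(alert["id"], alert["host"])
```

Nothing new is added to the stack here; the existing queue simply gets one extra signal to sort on.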

Where to Start

Alert fatigue is a context problem, not a headcount or budget problem. Security teams already have the data and the tools – what’s missing is the context that turns alerts into decisions. Without it, SOC analysts keep burning out on noise while real risk goes unaddressed.

So where do you start? Build a foundational exposure data set, map it to high-risk assets and choke points, and feed that context into the tools your analysts already use. Start small, report early wins, and build from there.

Burnout comes from noise, and the noise is fixable without adding to the stack. Fix it, and analysts can finally focus on what actually matters.

Sign up for the webinar to learn more.